Embodied Robotics Innovation Platform (Enhanced) GX-MAT-09S

An engineering-grade design kit for embodied composite robots, supporting the construction of 11 chassis, 7 robotic arms, and 88 composite robot configurations. It uses 12 V encoded DC motors, and the chassis carries loads of up to 25 kg. Controllers span Arduino, STM32, and edge computing boards, supporting multi-level development and mainstream robot sensors such as vision and lidar. Suitable for professional innovation training.

Applicable Audience/Scenarios

University robotics labs, research programs, and competition teams

Highlights

- 11 modular chassis + 7 manipulators → 88 hybrid robot builds

- Full-stack sensing: AI vision, speech, IMU, line tracking, lidar

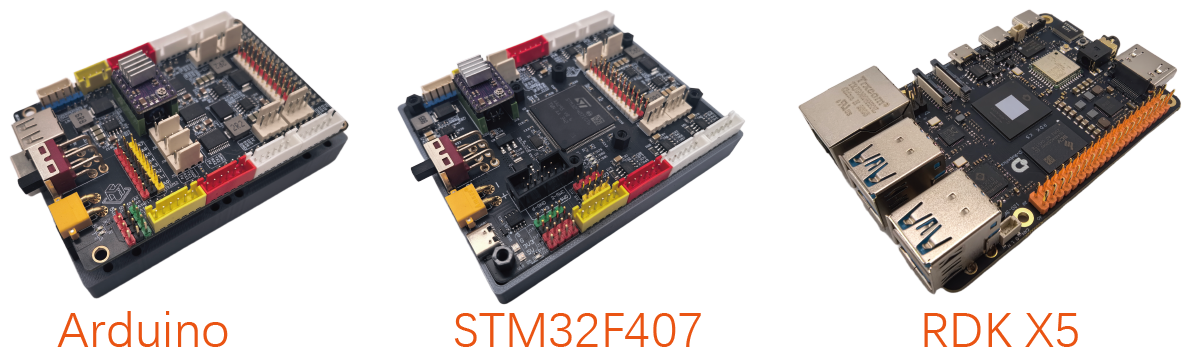

- Arduino + STM32 + Horizon RDK X5 (10 TOPS) controller stack

Product Features

Embodied System Deconstruction

Breaks down mobile hybrid robots into structure, drive, sensing, and control layers, revealing how embodied robots achieve perception–decision–action loops.

Modular Learning Path

Provides 11 chassis, 7 manipulators, and 88 composite forms so learners can practice design, assembly, calibration, and control across complete projects.

Full-Stack Perception

Integrates AI vision, monocular imaging, voice interaction, posture IMU, obstacle avoidance, line tracking, and navigation lidar to cover embodied sensing scenarios.

Multi-Layer Controller Stack

Arduino enables graphical/C++ entry, STM32 addresses professional MCU development, and Horizon RDK X5 (Ubuntu + ROS, 10 TOPS) powers advanced embodied applications.

Curriculum & Competition Coverage

Supports courses such as Mechanics, Sensors, MCU, Robotics, ROS, and Mobile Navigation, and aligns with national collegiate robotics innovation and engineering practice contests.

Lab Scenarios

The suite ships with canonical embodied chassis and manipulators so learners can rapidly assemble 88 hybrid robots spanning differential, holonomic, steering, and dual-arm systems.

Robot Chassis

Robotic Arm Configurations

Composite Robots

Configuration

Sensor Configuration

Delivers the sensing stack required by embodied robots, enabling perception, interaction, navigation, and line tracking within a single platform.

- AI vision camera

- Monocular imaging module

- AI speech recognition module

- Posture IMU sensor

- Obstacle avoidance / line tracking array

- Navigation-grade lidar

Controller Configuration

The three-tier controller stack combines Arduino (rich I/O with graphical/C++ entry), STM32F407 (professional MCU development and embedded engineering), and Horizon RDK X5 (Ubuntu + ROS, 10 TOPS edge AI for SLAM, vision, and embodied AI workloads), covering the full spectrum from classroom teaching to robotics research.

Software Configuration

Supplies Arduino IDE, STM32 toolchains, and Ubuntu/ROS environments with sample projects, supporting development from hardware drivers to AI/ROS applications.

Compatible with Arduino libraries, HAL/FreeRTOS, ROS/MoveIt, OpenCV, YOLO, speech SDKs, and other open ecosystems so coursework and research assets integrate quickly.

Experiments

The lab program spans microcontrollers, sensors, embedded Linux, computer vision, mobile chassis, manipulators, hybrid robots, ROS, and navigation, forming a complete learning path from entry to advanced projects.

Microcontroller Integration

Covers Arduino and STM32 from board familiarization to EEPROM access and library management.

- Arduino board familiarization: Understand chip specs, interfaces, memory, and circuit layout; configure the development environment.

- STM32 board familiarization: Review MCU performance, pins, circuitry, and toolchain setup.

- LED blinking: Use `digitalWrite()` and `delay()` to practice digital output control.

Motor Integration

Focuses on DC motors and servos, including encoder feedback and PID speed control.

- Controlling DC motors: Master digital drive methods for brushed DC motors.

- Controlling encoded DC motors: Capture encoder data, understand PID theory, and implement closed-loop speed control.

- Servo control: Operate servos with `myservo.attach()` and `myservo.write()` for precise positioning.

- Gyroscope sensor: Use `MPU6050.cpp` to obtain posture data.

- Voice recognition sensor: Use `HBR640.h` to complete speech recognition and command triggering.

- AI vision sensor: Master camera video display and AI vision inference workflows.
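The closed-loop speed control mentioned for encoded DC motors can be sketched in a few lines. The following is a minimal illustration in Python, not the kit's firmware: the gains, the 12 V output clamp, and the first-order motor model (`gain`, `tau`) are all assumptions chosen so the loop settles cleanly, and the sketch omits anti-windup handling.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Discrete PID controller; gains are illustrative, not tuned for the kit."""
    kp: float
    ki: float
    kd: float
    out_limit: float = 12.0  # assumed clamp, matching a 12 V supply
    _integral: float = 0.0
    _prev_error: float = 0.0

    def update(self, target: float, measured: float, dt: float) -> float:
        """Return the clamped control output for one sample period."""
        error = target - measured
        self._integral += error * dt
        derivative = (error - self._prev_error) / dt
        self._prev_error = error
        out = self.kp * error + self.ki * self._integral + self.kd * derivative
        return max(-self.out_limit, min(self.out_limit, out))


def simulate(target_rpm: float, steps: int = 500, dt: float = 0.01) -> float:
    """Run the loop against a hypothetical first-order motor model."""
    pid = PID(kp=0.05, ki=0.5, kd=0.0)
    rpm = 0.0
    gain, tau = 20.0, 0.1  # assumed: 20 rpm per volt, 0.1 s time constant
    for _ in range(steps):
        volts = pid.update(target_rpm, rpm, dt)
        # Euler step of d(rpm)/dt = (gain * volts - rpm) / tau
        rpm += (gain * volts - rpm) * dt / tau
    return rpm
```

On real hardware, `measured` would come from encoder counts per sample period rather than a simulated model, and the output would set a PWM duty cycle.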

Sensor Projects

Covers TTL, line tracking, ultrasonic, IMU, speech, and AI vision sensors.

- TTL sensor integration: Read sensor parameters and apply them in code.

- Four-channel line tracking: Implement autonomous line following.

- Ultrasonic ranging: Understand measurement formulas and adapt algorithms to real environments.

- Gyroscope sensing: Use `MPU6050.cpp` to obtain posture data.

- Speech recognition sensor: Trigger commands via `HBR640.h` speech recognition APIs.
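For the ultrasonic ranging item, the usual measurement formula is distance = (speed of sound × echo time) / 2, since the pulse travels to the obstacle and back. The sketch below assumes the echo time is available in microseconds and uses a common linear approximation for the speed of sound in air; the function names are hypothetical and not taken from the kit's libraries.

```python
from statistics import median
from typing import Sequence


def speed_of_sound(temp_c: float = 20.0) -> float:
    """Approximate speed of sound in dry air, in m/s."""
    return 331.3 + 0.606 * temp_c


def echo_to_distance_cm(echo_us: float, temp_c: float = 20.0) -> float:
    """Convert a round-trip echo time (microseconds) to a one-way distance (cm)."""
    round_trip_m = speed_of_sound(temp_c) * echo_us * 1e-6
    return round_trip_m / 2.0 * 100.0


def filtered_distance_cm(echo_samples_us: Sequence[float], temp_c: float = 20.0) -> float:
    """Take the median of several readings to reject occasional spurious echoes."""
    return median(echo_to_distance_cm(t, temp_c) for t in echo_samples_us)
```

Taking the median of a few readings is one simple way to adapt the raw formula to noisy real environments; other filters would work equally well.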

Embedded Linux Projects

Uses Ubuntu + Python to practice GPIO, data processing, multithreading, and web communication.

- Color recognition: Install Ubuntu, manage SSH access, and practice file-system commands.

- Shape recognition: Use Python to drive LEDs and buttons with standard GPIO libraries.

- Sensor data acquisition: Collect, filter, and visualize data from multiple sensors via GUI.

- Networking & web services: Build socket communications and publish data with a simple web server.

- Multithreading: Apply Python threading for concurrent acquisition and processing with proper synchronization.

- Face recognition: Use OpenCV/dlib for face detection, feature extraction, and recognition.

- Visual line following: Write vision algorithms to detect ground trajectories and realize visual line following.

- YOLO deployment: Deploy a YOLO model for real-time object detection and classification.

- Dataset annotation: Use LabelImg/RectLabel to create and manage custom vision datasets.

- Fruit recognition: Deploy deep learning models on RDK X5 for real-time fruit recognition.

- Robotic arm recognition and handling: Combine visual recognition with robotic arm control to achieve automated picking and handling.
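The multithreading item pairs concurrent acquisition with processing. One common pattern is a producer thread feeding a consumer thread through a thread-safe queue, with a lock guarding any shared results. The sketch below uses only the Python standard library; the synthetic samples stand in for real sensor reads, and all function names are hypothetical.

```python
import queue
import threading


def acquire(out_q: queue.Queue, n_samples: int) -> None:
    """Producer: stands in for a sensor read loop (values are synthetic)."""
    for i in range(n_samples):
        out_q.put(float(i))
    out_q.put(None)  # sentinel: no more data


def process(in_q: queue.Queue, results: list, lock: threading.Lock) -> None:
    """Consumer: smooths samples with a 3-point moving average."""
    window = []
    while True:
        sample = in_q.get()
        if sample is None:
            break
        window = (window + [sample])[-3:]
        with lock:  # results is shared with the main thread
            results.append(sum(window) / len(window))


def run(n_samples: int = 5) -> list:
    """Start both threads, wait for them to finish, and return the smoothed data."""
    q = queue.Queue(maxsize=8)  # bounded, so a fast producer cannot run away
    results, lock = [], threading.Lock()
    workers = [
        threading.Thread(target=acquire, args=(q, n_samples)),
        threading.Thread(target=process, args=(q, results, lock)),
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results
```

The queue handles the hand-off between threads, so the explicit lock is only needed for the shared `results` list.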

Computer Vision Projects

Leverages RDK X5 and camera modules for color, shape, QR code, tracking, detection, and dataset workflows.

- Color recognition: Create custom datasets with LabelImg/RectLabel to support training.

- Shape recognition: Create custom datasets with LabelImg/RectLabel to support training.

- QR code recognition: Create custom datasets with LabelImg/RectLabel to support training.

- Gimbal tracking of geometric shapes: Create custom datasets with LabelImg/RectLabel to support training.

- Robot tracking of colored targets: Create custom datasets with LabelImg/RectLabel to support training.

- Face recognition: Create custom datasets with LabelImg/RectLabel to support training.

- Vision-based line following: Create custom datasets with LabelImg/RectLabel to support training.

- YOLO deployment: Create custom datasets with LabelImg/RectLabel to support training.

- Dataset annotation: Create custom datasets with LabelImg/RectLabel to support training.
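Color recognition is often introduced through HSV thresholding before moving to trained models. The sketch below classifies a single RGB pixel by hue using only the Python standard library. It is an illustration of the idea, not the kit's vision pipeline: the hue ranges and the saturation/value cut-offs are assumptions, and a camera-based version would apply the same test across image regions.

```python
import colorsys

# Hue ranges in degrees; the boundaries are illustrative assumptions.
HUE_RANGES = [
    ("red", 0, 20),
    ("yellow", 40, 70),
    ("green", 80, 160),
    ("blue", 200, 260),
    ("red", 340, 360),
]


def classify_color(r: int, g: int, b: int,
                   min_sat: float = 0.4, min_val: float = 0.2) -> str:
    """Name the dominant color of an 8-bit RGB pixel, or return 'unknown'."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    if s < min_sat or v < min_val:
        return "unknown"  # too gray or too dark for hue to be reliable
    hue_deg = h * 360.0
    for name, lo, hi in HUE_RANGES:
        if lo <= hue_deg <= hi:
            return name
    return "unknown"
```

Red appears twice in the table because hue wraps around at 360 degrees.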

Mobile Chassis Projects

Covers assembly, drive control, and odometry for differential, holonomic, Foley, mecanum, and steering chassis.

- Tri-wheel differential chassis: Assembly, drive control, and odometry tuning.

- Four-wheel rear differential chassis: Assembly, drive control, and odometry tuning.

- Six-wheel differential chassis: Assembly, drive control, and odometry tuning.

- Tri-wheel Foley-wheel chassis: Assembly, drive control, and odometry tuning.

- Four-drive differential chassis: Assembly, drive control, and odometry tuning.

- Four-wheel Foley-wheel chassis: Assembly, drive control, and odometry tuning.
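For the differential chassis in this list, odometry is typically computed by integrating left and right encoder increments. The sketch below shows the standard differential-drive update with midpoint integration. The geometry defaults (`ticks_per_rev`, `wheel_radius`, `track_width`) are placeholder assumptions, not the kit's specifications; odometry tuning largely consists of calibrating these values.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float = 0.0      # meters
    y: float = 0.0      # meters
    theta: float = 0.0  # radians, counter-clockwise positive


def update_odometry(pose: Pose, d_left_ticks: int, d_right_ticks: int,
                    ticks_per_rev: int = 1320,    # assumed encoder resolution
                    wheel_radius: float = 0.03,   # assumed, meters
                    track_width: float = 0.20) -> Pose:  # assumed, meters
    """Integrate one pair of encoder increments into a new pose."""
    m_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = d_left_ticks * m_per_tick
    d_right = d_right_ticks * m_per_tick
    d_center = (d_left + d_right) / 2.0
    d_theta = (d_right - d_left) / track_width
    # Midpoint integration: advance along the average heading of the step.
    heading = pose.theta + d_theta / 2.0
    theta = pose.theta + d_theta
    theta = math.atan2(math.sin(theta), math.cos(theta))  # wrap to [-pi, pi]
    return Pose(pose.x + d_center * math.cos(heading),
                pose.y + d_center * math.sin(heading),
                theta)
```

Holonomic and steering chassis need different kinematic models; this update applies only to the two-sided differential case.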

Manipulator Projects

From serial arms to SCARA and dual-arm systems, covering assembly and kinematics.

- 4-DOF serial manipulator: Assembly, drive control, and kinematic planning.

- 5-DOF serial manipulator: Assembly, drive control, and kinematic planning.

- 6-axis serial manipulator: Assembly, lift control, and coordinated kinematics.

- SCARA manipulator: Assembly, lift control, and coordinated kinematics.

- Dual-arm robot: Assembly, lift control, and coordinated kinematics.

- Elevating dual-arm robot: Assembly, lift control, and coordinated kinematics.

- Four-wheel steering composite robot: Three options: gimbal, four-axis, and SCARA.
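Kinematic planning for these arms builds on forward and inverse kinematics. As a minimal illustration, the sketch below solves both for a planar two-link arm, which is also the horizontal part of a SCARA. The link lengths and function names are hypothetical, and the 4-, 5-, and 6-axis arms in the kit need more general methods.

```python
import math


def forward(l1: float, l2: float, q1: float, q2: float):
    """End-effector (x, y) for joint angles q1 and q2, in radians."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def inverse(l1: float, l2: float, x: float, y: float, flip_elbow: bool = False):
    """Joint angles reaching (x, y); flip_elbow selects the mirrored solution."""
    # Law of cosines gives the elbow angle.
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0:
        raise ValueError("target is outside the reachable workspace")
    s2 = math.sqrt(1.0 - c2 * c2)
    if flip_elbow:
        s2 = -s2
    q2 = math.atan2(s2, c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return q1, q2
```

A quick sanity check is to feed the result of `inverse` back into `forward` and confirm it reproduces the target point for both elbow configurations.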

Hybrid Robot Projects

Combine chassis and manipulators to build application-ready embodied robots.

- Tri-wheel differential hybrids: Gimbal, SCARA, and six-axis composite robots.

- Four-wheel differential hybrids: Gimbal, 4/5/6-axis, SCARA, dual-arm, and dual-arm lift variants.

- Six-wheel differential hybrids: Gimbal, 4/5/6-axis, SCARA, dual-arm, and dual-arm lift variants.

Robot Operating System (ROS)

Guides learners through ROS onboarding, package development, and MoveIt motion control.

- ROS quickstart: Explore file structure and control turtlesim and mobile robots via topics, services, and parameters.

- Building and porting ROS packages: Create packages, configure environments, and implement keyboard teleoperation.

- URDF models & MoveIt control: Build URDF models, visualize them in Rviz, and command manipulators with MoveIt.

- Map building with Gmapping: Master the principles and configuration workflow to complete full-parameter tuning and map generation.

Knowledge Base

Get more technical documentation, tutorials, and FAQs about this product.

Key Questions

Q2: For university courses in ROS and mobile robot navigation, which products are most suitable and why?

Embodied Robotics Innovation Platform (Enhanced) GX-MAT-09S

Portable ROS Navigation Learning Platform UNI-WR2

Answer: The most suitable products for ROS and mobile robot navigation courses are the Portable ROS Navigation Learning Platform UNI-WR2 and the Embodied Robotics Innovation Platform (Enhanced) GX-MAT-09S. Their core advantages are as follows:

UNI-WR2:

• Flexible deployment: ultra-portable (<13 cm, <550 g), enabling SLAM navigation on a tabletop as small as 60 cm × 60 cm without requiring a large site;

• Teaching depth: ROS engineering deployment is broken down into 5 steps (principles → demonstration → framework decomposition → package configuration → full-parameter tuning), combined with the Cartographer, Hector, and Gmapping navigation methods to form progressive experiments.

GX-MAT-09S:

• Comprehensive functions: supports ROS courses, can assemble 11 chassis types plus 7 robotic arm configurations, and with the lidar module (range 0.12-8 m) covers mobile robot navigation and localization practice;

• Computing support: equipped with an RDK X5 mainboard (10 TOPS) and preinstalled Ubuntu + ROS, supporting the execution and tuning of complex algorithms such as SLAM mapping and autonomous obstacle avoidance.